
3D Mask Face Anti-Spoofing (P C Yuen et al.)

Research on face recognition technology over the past three decades has mainly focused on achieving high recognition accuracy of facial images that contain variations, for instance, in illumination, pose, scale and expression. Many algorithms with excellent recognition performance have been developed, and face biometric systems have consequently been deployed in many practical applications in the past decade. Although system accuracy is an important factor for practical face biometric systems, security is also a concern. The system has to be able to reject illegal users who present an imitation or fake face of an enrolled user, which is referred to as a face spoofing attack.

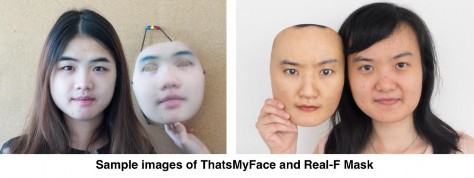

Due to the popularity of social networks, it is very easy to obtain facial biometric information from the Internet. People commonly upload images and videos to share their daily activities with friends, so such material is not difficult to obtain from open sources. A face spoofing attack may use one of three approaches: displaying a printed photo, playing back a face video, or wearing a 3D facial mask. Figure 1 shows an example of each attack. Anti-spoofing algorithms that use the texture of the human face and multi-spectral analysis perform very well against printed photo and video replay attacks. Human-computer interaction (HCI) methods, such as asking the user to blink or move his/her lips, have also been proposed for detecting live faces. However, it has recently become possible to obtain low-cost, hyper-real 3D colour masks off the shelf. As the colour and texture of such masks look real, they can be very difficult to distinguish from real faces, and simple HCI-based liveness detection may not work well against them. Figure 2 shows sample images of 3D masks, including a hyper-real one. Experimental results have also shown that state-of-the-art face anti-spoofing methods are not good enough to detect a mask attack, and that a masked face can successfully spoof face recognition systems. There is therefore an urgent need to develop more powerful face anti-spoofing algorithms to prevent mask attacks.

Figure 1. Example images of the three attack approaches: photo attack, video attack and 3D mask attack.

Figure 2. Example images of 3D mask attack.

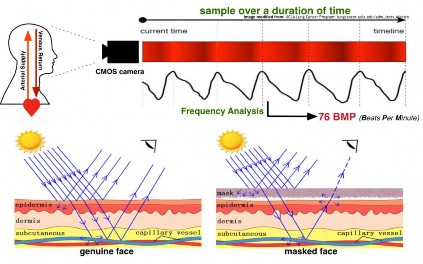

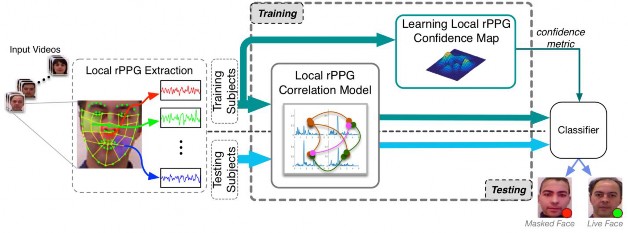

As even humans find it difficult to distinguish a face wearing a hyper-real silicone mask from a real one, image processing and analysis methods based on conventional face appearance may not be feasible. This project therefore proposes a new approach that tackles the problem in the most fundamental way, by analysing the blood volume flow in the living tissues of the face. To achieve this, we use remote photoplethysmography (rPPG), an optical technique for detecting blood volume flow at a distance. By its nature, rPPG does not work on a masked (fake) face, which makes it well suited to this project. Figure 3 shows the principle of rPPG and its effect on face anti-spoofing. However, rPPG is sensitive to illumination changes and head motion, the two typical variations that occur when recognising faces from videos. We therefore develop a novel local rPPG correlation model that extracts discriminative local heartbeat signal patterns, so that an imposter can be detected more reliably regardless of the material and quality of the mask. To further exploit the characteristic distribution of rPPG signals over real faces, we learn a confidence map from heartbeat signal strength and use it to weight the local rPPG correlation pattern for classification. Figure 4 shows an overview of our proposed method.
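The rPPG principle described above can be illustrated with a minimal sketch. It assumes that a per-frame trace of the mean skin colour value of one facial region is already available, and it checks whether that trace carries a dominant periodic component in a plausible heart-rate band. The band limits (0.7 to 4 Hz), the helper names `heartbeat_peak` and `synthetic_trace`, and the synthetic signals are all illustrative assumptions, not part of the project's implementation. A plain DFT is used to keep the example dependency-free.

```python
import cmath
import math
import random


def heartbeat_peak(trace, fps, lo_hz=0.7, hi_hz=4.0):
    """Return (peak frequency in Hz, share of in-band power held by that peak).

    The band limits are an assumed heart-rate range (42 to 240 beats per minute).
    """
    n = len(trace)
    mean = sum(trace) / n
    centred = [v - mean for v in trace]  # drop the constant skin-tone level
    power = {}
    for k in range(1, n // 2):
        freq = k * fps / n
        if lo_hz <= freq <= hi_hz:
            # Plain DFT coefficient for frequency bin k.
            coeff = sum(
                centred[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n)
            )
            power[freq] = abs(coeff) ** 2
    total = sum(power.values())
    peak_hz = max(power, key=power.get)
    share = power[peak_hz] / total if total > 0 else 0.0
    return peak_hz, share


def synthetic_trace(pulse_amplitude, fps=30, seconds=10, pulse_hz=1.2, seed=0):
    """Hypothetical test signal: constant level + optional pulse + sensor noise."""
    rng = random.Random(seed)
    return [
        100.0
        + pulse_amplitude * math.sin(2 * math.pi * pulse_hz * t / fps)
        + rng.gauss(0.0, 0.2)
        for t in range(fps * seconds)
    ]


# A "live" trace carries a 1.2 Hz pulse; a "mask" trace is noise only.
live_hz, live_share = heartbeat_peak(synthetic_trace(0.5), fps=30)
mask_hz, mask_share = heartbeat_peak(synthetic_trace(0.0), fps=30)
print(f"live: peak {live_hz:.1f} Hz, power share {live_share:.2f}")
print(f"mask: peak {mask_hz:.1f} Hz, power share {mask_share:.2f}")
```

In this toy setting the live trace concentrates most of its in-band power at the pulse frequency, while the noise-only trace spreads its power across the band. Real videos would additionally need face tracking and some robustness to illumination changes and head motion, which is the difficulty the local correlation model is meant to address.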

Figure 3. Principle of rPPG and its effect on 3D mask face anti-spoofing.

Figure 4. Block diagram of the proposed method. It has four main components: 1) local rPPG extraction, 2) local rPPG correlation modelling, 3) confidence map learning and 4) classification. From the input face video, local rPPG signals are extracted from local regions selected along facial landmarks. The proposed local rPPG correlation model then extracts a discriminative local heartbeat signal pattern through cross-correlation of the input signals. In the training stage, a local rPPG confidence map is learned and transformed into a metric for measuring the local rPPG correlation pattern. Finally, the local rPPG correlation pattern and the confidence metric are fed into a classifier.
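The correlation and confidence-weighting steps in the block diagram can be sketched as follows. This is a simplified illustration under stated assumptions, not the published model: the local signals are taken as given, each pair of regions contributes one feature (the share of the cross-correlation spectrum held by its strongest bin), and the learned confidence map is replaced by a hypothetical per-region weight list. The names `band_spectrum`, `correlation_pattern` and `synthetic_regions` are introduced here for illustration only.

```python
import cmath
import math
import random
from itertools import combinations


def band_spectrum(signal, fps, lo_hz=0.7, hi_hz=4.0):
    """DFT coefficients of a mean-removed signal inside an assumed heart-rate band."""
    n = len(signal)
    mean = sum(signal) / n
    centred = [v - mean for v in signal]
    return [
        sum(centred[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
        for k in range(1, n // 2)
        if lo_hz <= k * fps / n <= hi_hz
    ]


def correlation_pattern(local_signals, fps, confidence=None):
    """One feature per region pair: peak share of the cross-correlation spectrum.

    `confidence` is a hypothetical per-region weight standing in for a learned
    confidence map; by default every region is weighted equally.
    """
    spectra = [band_spectrum(s, fps) for s in local_signals]
    if confidence is None:
        confidence = [1.0] * len(spectra)
    features = []
    for i, j in combinations(range(len(spectra)), 2):
        # The spectrum of a cross-correlation is the product of one signal's
        # spectrum with the complex conjugate of the other's.
        cross = [abs(a * b.conjugate()) for a, b in zip(spectra[i], spectra[j])]
        total = sum(cross)
        peak_share = max(cross) / total if total > 0 else 0.0
        features.append(confidence[i] * confidence[j] * peak_share)
    return features


def synthetic_regions(pulse_amplitude, regions=3, fps=30, seconds=10, seed=0):
    """Hypothetical local signals: a shared 1.2 Hz pulse plus independent noise."""
    rng = random.Random(seed)
    return [
        [
            pulse_amplitude * math.sin(2 * math.pi * 1.2 * t / fps + 0.3 * r)
            + rng.gauss(0.0, 0.2)
            for t in range(fps * seconds)
        ]
        for r in range(regions)
    ]


live_pattern = correlation_pattern(synthetic_regions(0.5), fps=30)
mask_pattern = correlation_pattern(synthetic_regions(0.0), fps=30)
print("live:", [round(f, 2) for f in live_pattern])
print("mask:", [round(f, 2) for f in mask_pattern])
```

The intuition behind correlating pairs of regions is that a genuine heartbeat is shared across the face, so it reinforces itself in every pair, whereas noise that is independent from region to region does not produce a consistent peak. The resulting feature vector would then be passed to a classifier of the reader's choice.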

References

S. Liu, P. C. Yuen, S. Zhang, and G. Zhao, “3D Mask Face Anti-spoofing with Remote Photoplethysmography”, ECCV, 2016.

S. Liu, B. Yang, P. C. Yuen, and G. Zhao, “A 3D Mask Face Anti-spoofing Database with Real World Variations”, CVPRW, 2016.

For further information on this research topic, please contact Prof. P C Yuen.
